By Jakob Strohl ’26, staff writer

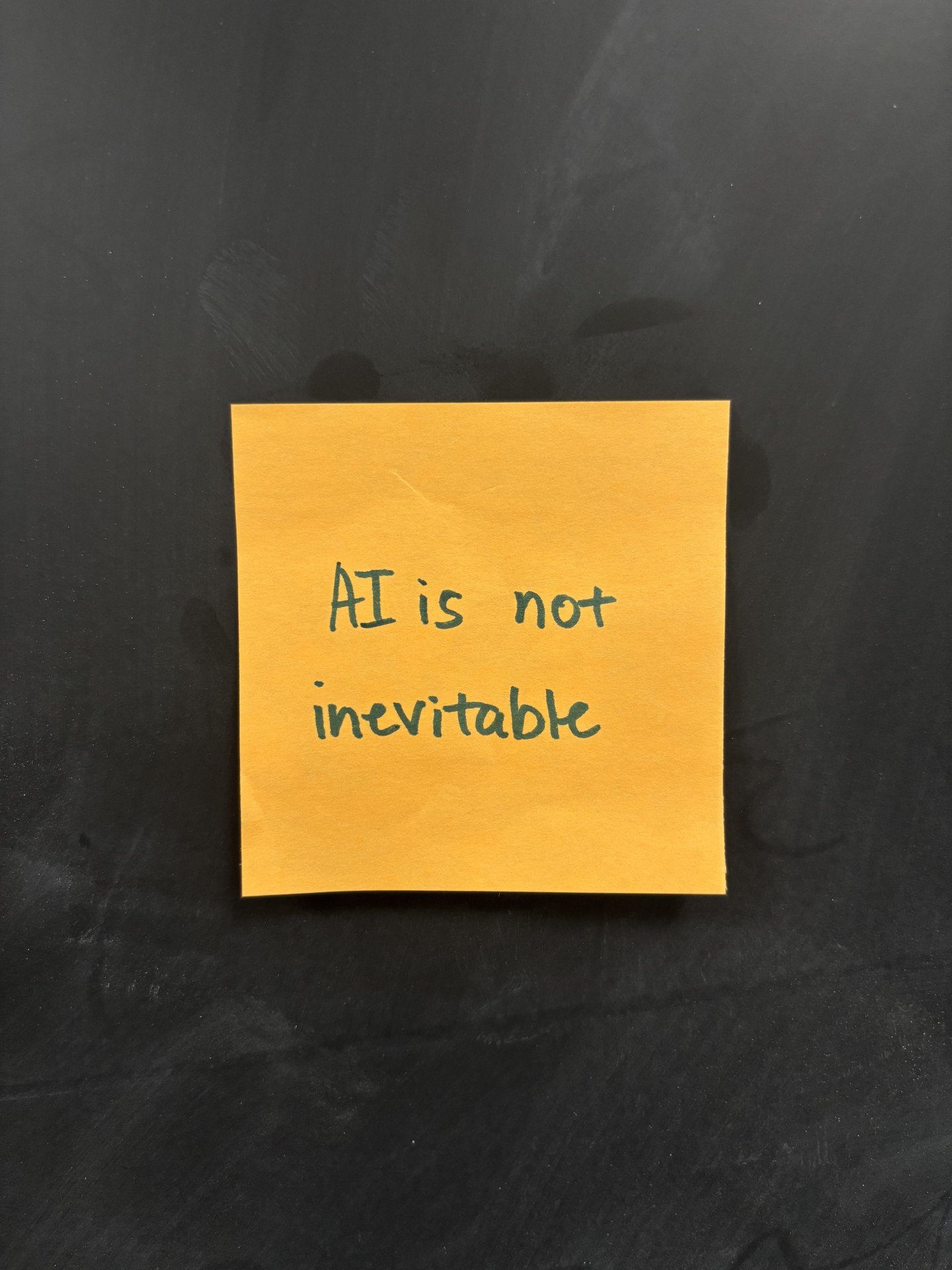

Around a week ago, students were met with a flurry of orange sticky notes as they walked into classrooms and buildings around the college. The green scrawl across all of them: “AI is not inevitable.” Though even a cursory scan of the laptop screens illuminating Mund’s living room in the evening hours reveals something shameless, rampant – at least illusorily inevitable. Students huddle around Gemini or ChatGPT – tasteful sans-serif subheadings, indented equations, and emoji-punctuated lists populate the displays – some copying down notes from the digital oracles while others submit endless trains of documents and screenshots for their consideration. It’s hard to put a finger on what exactly this scene is. To the pedant, it is most assuredly an epidemic or scourge. To the proponent, it is the dawn of a more democratic era of education. To the average student, it’s something different altogether, often something far less philosophically loaded.

“AI is a tool,” said Chaz Rokosz, a senior political science major. “Like any tool, it’s designed to be beneficial but can be misused.” His responses to an interview about LVC’s AI policy forays were incredibly blunt and reflect a position that appears to be widely held among the student body – not so much one devoid of nuance, but a more pragmatic, apathetic acknowledgement that AI simply is without any socially revelatory appeal. “As with all forms of human progress, we’ll have to see if we can find an effective way to manage its usage and mitigate the downsides… accept that it is a tool that isn’t going anywhere.”

Rokosz’s point about AI not going anywhere is one oft-echoed by artificial intelligence researchers and field experts who increasingly view the technology as one of general purpose and predict its global impact to unfold on a scale similar to that of the steam engine or electricity. And as with any general-purpose technology, there is simply no way to safely ignore the problem of legislation. Truly, it is the mandate and duty of any institution to clearly, accessibly lay down expectations and define appropriate use cases, and from the perspective of many students, that has not yet been done at Lebanon Valley with artificial intelligence. Though it most certainly can be.

In multiple casual conversations with students and faculty, two formal interviews with Rokosz and a senior in a STEM field who wishes to remain anonymous, and upon review of some available college materials online, the issue appears to be in three parts.

- There is no central, student-facing AI policy that sets uniform investigation, appeals, and punishment structures and binds a devolved system to certain predictable standards.

- An institution that cannot guarantee a basic level of education on appropriate use cases for AI and literacy on the technology may end up punishing ignorance rather than malice.

- With no real guidance outside of hackneyed ‘principles,’ faculty and administrative knowledge and use vary wildly from complete illiteracy and open hostility to a near-universal acceptance and application that appears confusing at best and hypocritical/non-functional at worst.

— A policy for students —

Where LVC was consistently said to be lacking compared to other institutions is in a clear academic AI policy for students. Multiple conversations have yielded similar conclusions, chief among them that the school, in failing to delineate appropriate use, legitimates too many contradictory pedagogical philosophies. It is not necessarily the case that students crave bureaucratic efficiency and due process (as much as one might think those would be important) – it’s that students cannot get a read on whether Lebanon Valley and its administration encourage responsible use in the classroom with guardrails yet-undefined or adopt the yet-tacit posture that AI very often cannot be responsibly used and has little place in the learning process.

Deference to professors and departments is not a policy per se, as it does not make explicit the real bias of the institution – whether in the absence of a general statute, more ambient, daily AI use is assumed acceptable or not. When this cannot be gauged, due process cannot be guaranteed: Ignorant use in even menial (or spillover academic) tasks could be punished without anything more than tangential cause or may, conversely, be used by students to justify academic dishonesty by simply claiming they “didn’t know it was wrong.”

In interviews with Rokosz and the anonymous STEM major, and in light polling of others on the matter of preference in a school-wide policy – whether AI should, in fact, be presumed permissible in college life when no explicit rules have been made – two main arguments crystallized and led to an interesting revelation about the utility of such a policy in influencing the campus demographic.

To start, significant common ground between Rokosz and the STEM major was found in rebuttal to the perception that AI is merely a vehicle for cheating. When asked to “estimate the percentage of students you believe use AI to gain unfair advantages on assignments,” both pegged it at the same rough 10% in their respective fields, with the STEM major saying they’d imagine the actual percentage of what would colloquially be labeled as academic dishonesty at “closer to 40-50% across the whole college,” though “mostly on small or ‘busy-work’-type assignments.”

Keenly interesting is that, while both students took clear issue with those who used AI to gain advantages at the expense of others, they recognized that ubiquitous use (what Rokosz guessed is likely over 90% of students on campus) on ‘mindless’ tasks could hardly be considered the same kind of evil. This is deeply upsetting to traditional notions of what does and does not deserve punishment, as violations of academic integrity – something that has been held as somewhat of a sacred principle – were to be punished in principle and not in function or form. In these interviews and informal conversations, however, it was suggested that the real, necessarily punishable offenses occurring with the illicit use of artificial intelligence were those that directly cost other students relative successes, grades, and opportunities and replaced critical thinking wholesale.

“Unless there’s some kind of curve where someone else’s score affects yours, I don’t think it’s actually that big of an issue,” the STEM major said. “Overall, I think the negative aspects of AI impact the individual far more than they impact the group currently.”

In other words, simply subverting the normal learning process on low-stakes assignments or even misrepresenting one’s total ownership of “busy-work” material might not be enough for the zealous inquisition some students worry about happening in certain classrooms.

Moreover, both interviewees stressed the new, critical role AI has taken in serving populations that have long been underserved in traditional pedagogy.

“As someone with a learning disability, it’s definitely helped level the playing field,” said Rokosz. “What would take me three days to do because of tedious work – like checking grammar or citations – takes half the time. I can focus on the quality of my arguments rather than my formatting.”

The STEM major echoed this sentiment: “AI can be incredibly helpful to students who need to engage with course material differently than the typical student. I think AI can increase engagement with course material, or clarify concepts when a professor isn’t available, and these uses shouldn’t be classified as academic dishonesty.”

When prompted to define academic dishonesty, the STEM major added that it is “using AI to do work for you without getting permission from your professor… or when you claim you did work you didn’t. Using AI as a learning aid doesn’t fit either of those criteria.”

That being said, among the broader student population, another opinion seemed to form that potential grade inflation due to AI use may disproportionately impact prospective graduate students, especially if those students are avoiding using AI to the same degree out of fear of academic repudiation. The STEM major noted that the above discussion on relative harm “falls out the window when looking at situations like grad school applications where GPA does actually matter.”

But this presents its own set of significant questions and difficulties. The disparity in prompt efficiency among students, the lack of basic literacy, and the lower opportunity cost of doing work for gen-ed courses brought by AI mean that any sanctioned AI use at all in programs with varying tolerances could precipitate potentially concerning levels of grade inflation. If a steady, competitive institutional GPA is something of a common good or right, as was suggested by multiple individuals, AI policy might be formed with the same deliberation the Federal Reserve uses when it crafts monetary policy. In this sense, an outcome- or harm-based view of proportional punishment cannot be taken to the logical extreme as a principle, and it would behoove the institution to straddle a middle ground now before policy becomes a firefight and not something crafted with care.

— Two competing views —

In deliberating on such a policy, the college would do well to bear in mind that these undercurrents in student opinions illustrate a stark divide in how they view the utility of education and LVC’s role in their future.

The subset that views a bachelor’s degree as a vetting process for workplace preparedness and sees Lebanon Valley as a kind of notary and early-career brokerage firm, allowing them access and networking into the job market, views AI in a far more favorable light than other populations. To them, the traditional wariness of misrepresentation is eclipsed by the potential for productivity and the preference of employers for AI natives. Not predispositionally accepting AI use is seen as a hindrance and penalizes advancement and adoption. This position is made extremely visible by the media, business lobbies, and a consortium of students and student organizations that want college to prepare them for success immediately outside their campus gates.

On the other hand, and far less visible, the population most inclined towards a heavily punitive policy that assumes AI’s disallowance if not otherwise stated is overwhelmingly composed of those angling for graduate degrees. To them, Lebanon Valley cannot water down its curriculum, tolerate grade inflation, or meaningfully rehabilitate proven cheaters because this directly devalues the prestige of their program and harms their ability to apply to graduate schools. The solution offered is not one of cautious integration, but alternative research opportunities.

To their point, there are other evidential forms of sustained thinking and project management that are not entirely vested in a student’s capacity to survive college for four years with no hardship or adversity, which is generally what GPA measures. Individual capstone projects and theses are more significant indicators of these virtues in a student and are far more revealing of the critical engine behind their research than GPA, which may well be inflated by AI outsourcing whether the institution attempts to preserve it or not. To them, what matters is how long the college will attempt to stave that inflation off, whether it will provide alternative opportunities for mentoring and graduate signaling, and whether LVC will seize the AI moment as an opportunity to gain institutional prestige.

The dissonance between these two camps suggests that any AI policy duly considered must understand that it will telegraph Lebanon Valley’s preference towards a particular viewpoint and advertise its institutional values to two disparate groups of prospective students. More critically, it could very well bring about demographic change, aligning LVC with more intensive programs and prioritizing prestige through graduate placement or incentivizing career opportunities and work placement in fields dominated by AI. Right now, until GPA becomes obsolete as a tool used to diagnose and rank the value of students, it is very difficult to balance the two views, and any policy becomes a balancing act – likely for the better part of the next decade.

— Avoiding arbitrary punishments —

Across formal interviews and informal polling, students agreed that punishment can neither be justified nor rehabilitative when there is no defined policy guiding it.

“It’s difficult to expect children to have the foresight to use AI in a constructive way when they’re already trying to navigate a difficult time in their lives…,” said the STEM major. “I think college students are old enough to make their own decisions on how they want to use AI, but they should first be educated about the potential outcomes of those decisions.”

Rokosz concurred: “Teach students ethical uses of AI rather than ban its usage entirely.”

Several floated the idea of a course that operates like an FYE and clearly outlines college expectations.

“I’d love if there was a mandatory, 1-credit seminar course that students had to take that fully outlined the uses and detriments of AI in an academic environment,” said the STEM major. “I think this would have a far more positive impact on student AI usage than simply banning it everywhere and sending a blanket message that it’s ‘bad.’”

When discussing the importance of such a course in maintaining fair, equitable treatment of AI users in the academic setting, the STEM major added that the hypothetical course is one of the only ways that an AI policy would be intelligible to students. “Very few students are going to use AI responsibly just because a college policy told them to. I think a much higher portion of students will willingly choose to follow the AI policy – and not try to skirt around it – if they’re able to fully understand the impacts of resigning their ability to learn.”

The preponderance of those asked about their vision for a schoolwide policy said some form of education and literacy should be mandated. Primarily, this would function as a safeguard against student rights violations, as it was noted by a few who chose to elaborate that the school cannot reasonably punish students if there is no reasonable expectation they understand the policy, its purpose, or even the technology the policy is discussing.

The STEM major concluded with their outlook on the future of AI in academic life. “Depending on how our education system decides to handle it, the future will either look very bright or very bleak when it comes to AI in schools. My main concern is that blanket rules will be made regarding AI usage without considering the nuances of something like an LLM.”

“I think I remain skeptically hopeful,” said Rokosz.

— Faculty and administrative use —

The last major area of concern that came up in conversations was that of faculty and administrative use. It was posed that students are currently expected to navigate many individual syllabi, as broad authority has been devolved to faculty to fashion policies that often reflect radically different teaching philosophies. Under the college’s academic honesty policy, punishments for first-time offenders are entirely organized by professors “up to and including failure in the course” with wide latitude for forgiveness.

The issue many students take with this is that classes are not discrete ecosystems where learning follows a set of laws the moment a problem set is started or a book is cracked open. When knowledge of what does and does not qualify as academic honesty is expected – copying and pasting from an academic journal is obviously unethical – the expansive jurisdiction of professors is justified, as they are often the most immediate point of contact with the offending student, are uniquely aware of the context to their actions, and have a specialized understanding of what their field expects and what their department needs.

When it comes to AI, many students believe that concepts like plagiarism and answer lookups that have always been relatively black-and-white are now far too grey to be determined at the faculty level. If semantics determine guilt, and each professor has a fundamentally different understanding of what artificial intelligence is – some going as far as to paint it as an academic antichrist or boogeyman – they ask if it is appropriate for one mercurial person to have complete control over both the definition of cheating and its punishment.

An AI honesty board composed of students, professors, and at least one expert in AI is seen as a far more responsible option for identifying legitimate cases of cheating and proportional punishments. This plan diversifies the perspectives offered on what it means to cheat, offering input from peer-to-peer, academic, and institutional viewpoints while being much more cognizant of both the ways students circumvent the rules and the ways they responsibly use AI to advance their studies. It also diffuses responsibility in decision-making so that no one person is implicated for bias or ignorance in a decision that is ultimately unfavorable to a student.

Regardless of policy specifics, what stood out across many conversations, and what truly rankles the student body, is that faculty and administrators are given what many view as carte blanche to use AI in developing course materials, sending memos and emails, creating advertisements, planning events, and reviewing communications that are all directly related to the quality of students’ education and experience. The rather trite argument used to dismiss this sentiment is that students are benefitting from the efficiency, time saved, and costs cut by AI as the institution grows nimbler and more responsive to feedback and professors have more time to mentor, but functionally, students see very little benefit.

Some expressed that professors allegedly using AI inappropriately cannot be relied on for field expertise and guidance. A few have said that once a member of the faculty or staff gains the reputation of unethically using AI, they are often dismissed by the student body as not credible or borderline fraudulent. It has even been suggested that students who feel they might be called out by professors, accused of cheating, or graded poorly should save evidence of those professors’ suspected AI use in generating class materials or grading to secure equal treatment.

In recent months, the divide has deepened. As my colleague Ryan Talton reported, LVC’s Marketing and Communications department doubled down on its AI use in multiple social media posts, turning off comments when outrage from students followed over what they perceived to be the lazy outsourcing of work to AI that could have been given to digital communications majors as educational opportunities. Shortly after this incident, Brian Boyer, supervisor of campus safety, caused a firestorm on the anonymous social media app Yik Yak after ironically emailing students an AI-generated photo in a parking update that some lauded as “hilarious” and others decried as tone-deaf and “unnecessary.”

With little to no guidance from the school in the form of a centralized policy, the buzzwords among the student body are “laziness,” “arms-race,” and “hypocrisy,” none of which have any place in discussions surrounding the college’s academic and administrative elites.

— A most brazen neo-luddism? The disorganization of LVC’s anti-AI front —

We arrive, finally, at a mild rebuke and constructive interrogation of the movement that saw sticky notes plastered to the walls and hung precariously like spiders in every dark corner and crevice of the school. Regarding them, a question has sat in the back of my mind and those of many other students – what exactly was the protest about?

Surely it cannot have been the technology itself. Yet – whether or not this was intentional – it has been taken as such. As a political heuristic, protests accomplish the most when they are limited to policy objectives or social ills caused by something that may be redressed, yet this redressability issue is precisely why the thing appeared so disorganized: the school must have some purview to change things. Right?

It was wholly unclear as to what, other than an ecstatic love letter to the freedom of expression, was desired by the group or individual that LVC or the students at large could meaningfully improve upon. Was it frustration over the imminent construction of a data center in South Annville? Was it an urging of state lawmakers to adopt stricter rezoning requirements for such a thing? Was it pleading for some type of school-wide policy that is more punitive than current diffuse allowances?

Many students had little idea and lampooned the protest on Yik Yak, with anonymous posts suggesting the entire display was “kinda inconvenient” and asking “what are we really proving here?” to which they were pedantically reminded that inconvenience is indeed the point of any protest with no further clarification. A similar melee was had on separate posts discussing the factual grounds for the protest and what all students would hypothetically come for if they “organized an anti-AI protest” and held it as a “rally on the quad…,” because they “can’t keep doing nothing [sic].”

What’s bothersome – and revealing – about this confusion is the wafting fetor of neo-luddism on the part of the organizers. The philosophical mire of the AI and anti-AI debate that campus has recently been plunged into is backgrounded by a widely-accepted, highly-educated guess that AI is, in fact, inevitable, at least in a societal-staying-power sense.

Currently, the federal government maintains a tight grip on GPUs produced by private firms like NVIDIA via export controls, is attempting to secure domestic supply chains for rare-earth minerals used in chip manufacturing, and aggressively protects the IP of large AI trusts like Anthropic and OpenAI against foreign competition. The United States military actively awards contracts to integrate private AI technology with military command structures and interfaces. Rather than being indicative of something with no staying power, this trend is patently illustrative of the government’s (and most of its elected representatives’) expectation that nearly unfettered AI advancement is and will continue to be a national security imperative for the foreseeable future.

As a bit of a reductio, assuming AI is to be phased out of the private and public marketplaces over the coming years at the behest of protestors, nearly every company with even modest AI integration would become inefficient, overvalued, and unable to provide returns, causing futures to tumble. As a gentle reminder, when the sudden or phased absence of a technology could erase decades of stock market gains and violently shift national security strategy, that usually means it is… inevitable.

Back on Yik Yak, amid rants about religion and DIY recipes for chicken parmesan at Mund, an anonymous user asked for thoughtful opinions on the topic of AI use, to which several were given. Each one, by virtue of its anecdotal specificity yet obvious throughline, motions at the same fatal disorganization plaguing the protest movement.

“It’s crazy that everybody here is paying so much just to have professors use AI to make assignments that students use AI to do,” lamented one commenter, “…it’s actually making us less smart and less good at careers [sic].” A psychology major I spoke to supported this position, suggesting that they have personally observed professors (not necessarily in the major) flagrantly using chatbots to generate content and answer questions without citations. They also noted that discussion posts are largely driven by students using AI and joked that it would be catastrophic to the student-teacher relationship if either discovered text humanizers at any appreciable scale.

The irony had become palpable and was seized upon by another Yik Yak user in a small dissertation on the reasoning ability of AI. “Not enough people realize at its core AI is not capable of thinking…,” they mused, “… everyone uses it as a substitute for thought and effort in a way that diminishes peoples [sic] ability to research drastically” though it still remains an “emerging technology with legitimate use-cases.” Totaling 31 and 37 upvotes respectively, this response and the one above both surpassed the original question and were extraordinarily well-received by the community.

Evidently, the important temperature check the anti-AI protestor(s) neglected to run on the student body was one that reveals deep-seated mistrust, not in the technology itself, but in the specific ways it is permissibly or unquestioningly used in the classroom. Now this is a redressable issue and one that can be argued effectively with countless testimonials, concrete examples, a large body of studies and statistics, and broad support for articulable policy. This is something Lebanon Valley has definite control over and something it should take seriously in the immediate future, lest it find itself scraping more used stickies off chalkboards and desk chairs.
